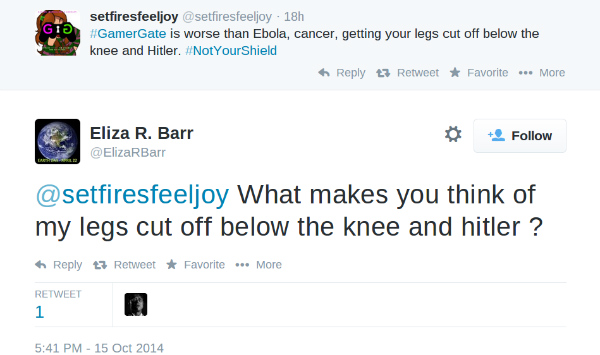

11/10/2023

Eliza therapy bot

Many early users were convinced of Eliza’s intelligence and understanding. A number of individuals, including Weizenbaum’s secretary, attributed human-like feelings to the computer program. I don’t know whether this was simply a matter of the time, when people couldn't imagine a computer being so eloquent. But when I ran this test today, all of my testers (technically skilled) realized that this is just a virtual psychotherapist.

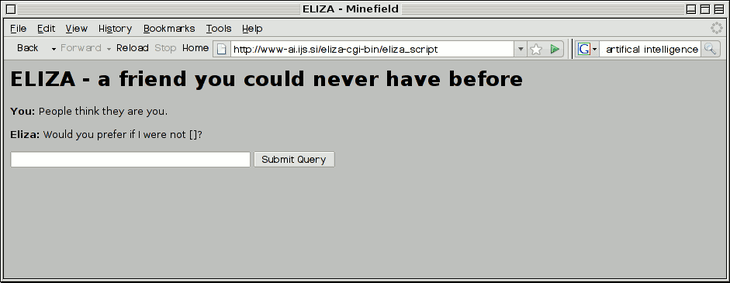

"At this writing, the only serious ELIZA scripts which exist are some which cause ELIZA to respond roughly as would certain psychotherapists (Rogerians). ELIZA performs best when its human correspondent is initially instructed to 'talk' to it, via the typewriter of course, just as one would to a psychiatrist. This mode of conversation was chosen because the psychiatric interview is one of the few examples of categorized dyadic natural language communication in which one of the participating pair is free to assume the pose of knowing almost nothing of the real world. If, for example, one were to tell a psychiatrist 'I went for a long boat ride' and he responded 'Tell me about boats', one would not assume that he knew nothing about boats, but that he had some purpose in so directing the subsequent conversation. It is important to note that this assumption is one made by the speaker. Whether it is realistic or not is an altogether separate question. In any case, it has a crucial psychological utility in that it serves the speaker to maintain his sense of being heard and understood. The speaker further defends his impression (which even in real life may be illusory) by attributing to his conversational partner all sorts of background knowledge, insights and reasoning ability. But again, these are the speaker's contribution to the conversation." (Source: )

Eliza simulated conversation by using a “pattern matching” and substitution methodology that gave users an illusion of understanding on the part of the program. This works surprisingly well even though there is no AI (artificial intelligence) or machine learning involved.
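To make the pattern matching and substitution idea more concrete, here is a minimal sketch in Python. It is not Weizenbaum's original script: the rules, the pronoun table, and the names `RULES`, `REFLECTIONS`, `reflect` and `respond` are all made up for illustration, and a real Eliza script has many more rules than this.

```python
import re

# Hypothetical pronoun swaps so the user's own words can be echoed back.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my",
}

# Hypothetical (pattern, response template) rules, tried in order.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bboats?\b", re.IGNORECASE), "Tell me about boats."),
]

# Fallback when no rule matches; keeps the conversation going.
DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in the captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(utterance: str) -> str:
    """Return the reply of the first matching rule, or the fallback."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Substitute the reflected captures into the response template.
            captured = [reflect(group) for group in match.groups()]
            return template.format(*captured)
    return DEFAULT_REPLY


if __name__ == "__main__":
    for line in ["I went for a long boat ride",
                 "I need a break from my job.",
                 "Hello"]:
        print(">", line)
        print(respond(line))
```

With these rules, `respond("I went for a long boat ride")` returns "Tell me about boats." and `respond("I need a break from my job.")` returns "Why do you need a break from your job?". The program only rearranges the speaker's own words; there is no knowledge about boats or jobs anywhere in it.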

Eliza is considered to be the first chatbot, published in 1966. The computer scientist Joseph Weizenbaum (8 January 1923 – 5 March 2008) developed it to demonstrate that communication between man and machine is superficial. Interestingly, it followed some of the fundamental design principles we still use in conversation design for chatbots and voice services: for instance, the conversation uses turn-taking. Furthermore, the bot does not need a database of real-world knowledge nor a concrete thread apart from psychiatric issues, because it is limited in scope to the psychiatric use case.
